The Solution to AI Cheating Is Within Our Grasp

We really *should* talk a lot more about watermarking.

My last post was more than four months ago — on September 11, 2025 — and in that post, I promised readers that I would be writing a series of posts on how digitization is changing work. Obviously, I didn’t write anything (though I did start on quite a few drafts); mostly, I got busy with teaching and disinclined to teach anything.

I am hoping to start writing again as the semester begins but rather than the promised series on digitization and work, I am planning to start by writing about some of the ways my course on “The Social Life of Computing” (which I teach every spring) has changed since I started teaching it back in 2017. I suspect that will align more with what I’m actually be spending a lot of time on in the next few months: teaching.

Before getting to the substantive changes in content, the biggest change I am making to all my courses is that they will all have in-class exams rather than analytical papers. This is a big change for me; I love the analytical paper since I was a TA back in grad school and I think it is one of the best assessments we have for courses in the humanities and social sciences. But rampant AI use means that the paper as an assessment just doesn’t work anymore, even with tweaks (or as my colleague Ari Edmundson aptly calls them, “epicycles”), and certainly not in large classes.

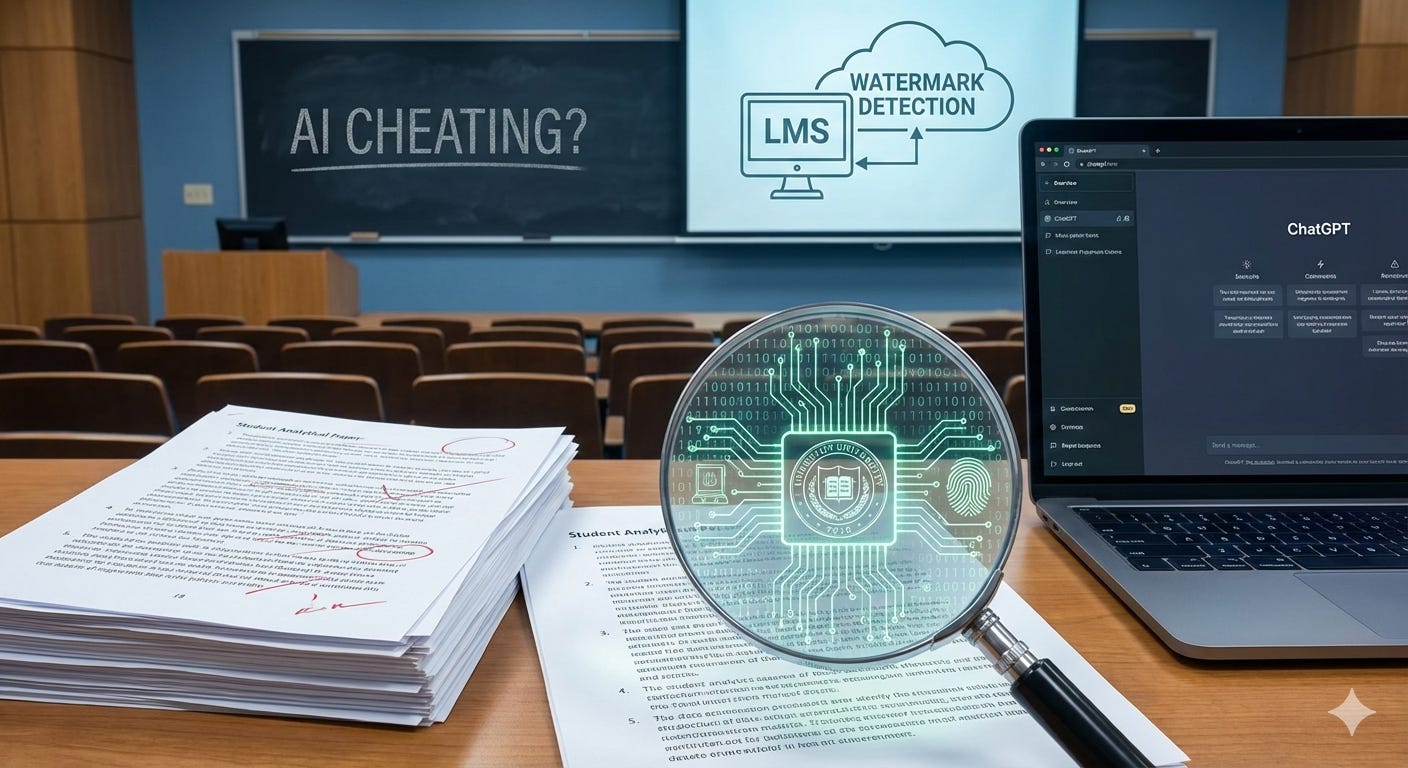

Just last week, the Chronicle of Higher Education published my op-ed on why universities should push more for the introduction of watermarking into generative AI programs that our students seem to use so much of. Please click on the link to read it at the Chronicle if you have a subscription. For those of you who don’t, a version of the article — a little different from the published version — is below.

*

The Solution to AI Cheating Is Within Our Grasp

Why aren’t we talking more about watermarking?

Last semester, I decided that I would no longer assign analytical papers in any of my large social science classes. This is a drastic change: my usual framework is that large 4-unit classes should have three papers, usually written in three different styles, due throughout the term. But there was no point anymore. When ChatGPT exploded onto the scene in 2022, I was reasonably confident that my prompts were LLM-proof. I have modified them since then to make them even more so. But I have started to realize that such modification is an exercise in futility that wasn’t doing much for anyone, least of all students. The papers submitted this semester were mostly poor quality; they also all sounded alike because LLM use was rampant; and the teaching team found it increasingly hard to assess if a student even knew anything even if they were increasingly using words of four syllables or more (“legitimacy,” anyone?).

So, going forward, I — like many other instructors all across the country — decided there would be no papers, just in-class (handwritten) exams, with group projects and presentations in the viva voce format. This is not a decision I am happy with. I think the analytical paper is an important tool for assessing learning; it allows students to demonstrate complicated learning outcomes involving understanding and assimilation that exams, with their limited time and high stress, just cannot accommodate.

It did not have to be this way. We did not need to give up the analytical paper as an assessment tool, as I and many of my colleagues are now doing.

All we really need is a framework and technology that allows instructors to reliably detect whether a student has used an LLM. This does not require broad agreement on contested questions; we do not need to agree on whether LLMs will cause mass unemployment or more productivity; whether they are racist, sexist, ableist, or extractivist, or whether Silicon Valley is an agent of freedom or inequality.

If we could reliably detect AI usage in student papers, that would leave instructors free to decide whether AI use impedes or enhances their learning goals. Those of us who believe that LLMs and their easy availability actively impede teaching students how to write can forbid AI usage—and punish students who use it—without worrying about accidentally penalizing innocent students. Those of us who are open to having students use LLMs can encourage them to use it and design assignments accordingly.

A technique to reliably detect AI use exists: it is called “watermarking.” It is not a magic solution and would, indeed, require collective action from universities. But a world where students would not use LLMs for writing assignments (unless instructors specifically asked them to) is well within the realm of possibility.

*

Let’s backtrack. In August 2024, Deepa Seetharaman and Matt Barnum wrote a detailed piece in The Wall Street Journal about a method researchers at OpenAI have been working on to help detect whether a text was produced by ChatGPT. No, this was not yet another classifier trained on AI-produced text. Rather, this technology would modify the output of the ChatGPT software itself. At its heart, ChatGPT is a prediction machine that produces text by probabilistically calculating the next word or sentence, what is called a “token.” As the Journal describes it, “OpenAI would slightly change how the tokens are selected… leav[ing] a pattern called a watermark.” Such watermarks, not visible to the regular ChatGPT user, would nevertheless be detected by special programs that knew the watermark in advance, thereby helping third parties reliably identify LLM usage.

In other words, this technology modifies AI-produced text to create a “fingerprint” alongside a “detector” that can identify it. A watermark is like a trademark printed in invisible ink, a serial number on a gun, or the license plate of a car. The watermark can simply indicate that the text is machine-generated, or it could be much more detailed in identifying provenance.

Obviously, watermarks involve a burden on the AI company. OpenAI, in this case, needs to modify its LLM model since the watermark is literally hidden in the text produced by the model. There is a chance this will degrade the quality of the output. But the Journal assures us that, according to OpenAI’s own internal surveys, this was not the case. “People familiar with the matter” told the Journal that “when OpenAI conducted a test,” they “found watermarking didn’t impair ChatGPT’s performance.”

So why is watermarking not deployed more? The problem was that when OpenAI surveyed its users, “nearly 30%” made it clear that should OpenAI implement watermarking, they would immediately decamp to a “rival [who] didn’t.”

The classic answer to this problem, from the point of view of economics and public policy, is regulation that forces all companies to take action so that no single company suffers a competitive disadvantage.

The problem of discouraging students from using LLMs seems tractable. It could be solved through cooperation and enforcement between LLM companies, Learning Management Systems (LMSes, e.g., Canvas), universities, and the government. All LLM platforms would be required to watermark their text; they would be forced to build tools that integrate with LMSes so those watermarks can be detected in student submissions. Instructors could then use the watermark detector to figure out whether students have used LLMs and take action.

Would it work at scale? It would require some work to think through “globalized tampering,” as OpenAI itself argued in a blogpost from 2024. For instance, students could take the output from one model, translate it into another language, and then retranslate it back, or run it through another LLM. It was “trivial to circumvention by bad actors.”

This is a serious point. It’s important to remember that this kind of watermarking will only work if there is collective action, meaning it must be adopted by all AI platforms, or at least the most important ones. Otherwise, a student could just take text from one program, run it through another, and remove the watermark.

This sort of watermarking— what some computer scientists have called “LLM text watermarking”—can and should be implemented, by government fiat if necessary. Enforcing this on AI providers will take time and effort, because AI providers themselves have something to lose in this arrangement — though laws like this are already on the books in the EU and China.

*

In a position paper on arXiv, computer scientists Yepeng Liu, Xuandong Zhao, Dawn Song, Gregory W. Wornell, and Yuheng Bu argue that LLM text watermarking would work best for purposes that matter much more for AI companies, such as “automatically filter[ing] out texts generated by their models when collecting training data” to train their next round of LLMs. It may be less effective for tracking student usage because there will always be rogue LLM models that do not implement LLM text watermarking. True, these rogue LLMs could be hunted and shut down. But this is harder than it seems; all you have to do is put your server in Russia.

It might still work as an academic integrity tool however, and universities should pursue this option resolutely. That’s because while the researchers and LLM companies are worried about “bad actors” — determined, unscrupulous people who will go to great lengths to circumvent these restrictions — most students who use LLMs are hardly that. They use LLMs mostly to save time and cut corners as they face an assignment deadline that they started on too late. They are unlikely to have either the inclination or the knowhow to get around these technological guardrails.

But LLM text watermarking is not the only kind of watermarking. Another kind is what the computer scientists call “in-context watermarking” (ICW). The effectiveness of ICW depends on the LLM’s “powerful in-context learning and instruction-following abilities,” which are only increasing with time.

Here’s how ICW would work: when instructors generate an assignment prompt, a program in the LMS would subtly change the text of the prompt. When students put this prompt directly into an LLM interface, the output would then contain a watermark, which can then be detected when the student submits the paper. ICW could theoretically operate without collaboration from LLM providers, although their cooperation would make the system even more effective. But the important players here would be the universities and LMS providers.

Universities could band together, force both LMS providers and AI companies to the negotiating table, and figure out ways to implement ICW to detect academic misconduct. If watermarking standards can be worked out, this benefits not just universities and instructors but AI companies as well. Instructors, once convinced that they can safely monitor whether students are using AI, are then free to construct assignments that encourage students to use AI. AI companies are free to innovate on new products for teaching contexts.

*

Why do we not discuss these solutions more on teaching listservs, or in the endless op-eds about what AI means for college education? Our discussions stay in a kind of box. Some instructors and educators oppose generative AI for political reasons (Silicon Valley, profit-seeking, environmental and energy impacts, what have you). Others urge instructors to modify their assignments to make them AI proof: more specific, more project-like, about teaching the process rather than the product.

But I have not yet seen a discussion of watermarking among instructors or universities (computer science articles notwithstanding).

The biggest problem, I suspect, is that it is too technical and does not easily map onto issues of pedagogy or the university’s mission, issues that instructors often feel most comfortable discussing. It also does not map clearly to politics; in fact, it is a politically agnostic solution that offers a clear path for action. It is agnostic with regard to pedagogy as well: instructors get to decide whether to let students use AI and are free to create assignments that work for their learning goals. Perhaps the silence is because watermarking is a technology of policing, and instructors—most of whom identify as progressives—are uncomfortable focusing on that aspect of their teaching. But even here, instructors have agency in deciding whether they want to use watermarking and they are free to use the metrics to allocate punishments in accordance with their own beliefs.

We shouldn’t let the perfect be the enemy of the good. For better or worse, LLMs are out there for all of us to use. And the analytical paper is too foundational to abandon for the security of the timed, in-class exam. We can continue to have our debates about how best to teach and the university’s mission and about what AI means. But in the meantime, we must figure out a way to reliably detect LLM output and save our students from themselves.

Love the article, it's a unique solution even if we can still debate whether it's fully the right one. So many of us, including myself, are ready to throw in the towel. That said I think you're on the right track here.

The main problem I see is the coordination problem, particularly with respect to university administrations who are conflict averse and frankly don't care that much about academic integrity.

I saw a bunch of discussion of watermarking back in 2023 (before ordinary writing professors were discussing much about LLMs) but I think it died out because open source models that wouldn’t follow the guidelines would be too easy to come by (even if LLaMa and Deepseek cooperated, it wouldn’t be hard for some unscrupulous company to jailbreak one of them).

I’ve been working with some people here at Irvine to see if we can get an old computer lab designated as a “writing lab” that would be proctored and open 12 hours a day, where students would have computers with access to all their class materials, but no AI, so that writing assignments could require being written there.